The first major AI-supply-chain attack of the agentic era happened this weekend, and if your team uses any third-party AI tool connected to Google Workspace, GitHub, or Slack, you should assume you are already inside the blast radius.

The headline is that Vercel disclosed a security incident on April 19. The real story is how it happened. The initial access vector was not a zero-day, not a misconfiguration, not a rogue insider. It was a third-party AI tool called Context.ai, with OAuth scopes into a Vercel employee's Google Workspace account that it probably should not have had. Vercel's CEO, in his public statement, suspects the attacker was "significantly accelerated by AI."

The defender was breached by AI, through AI, and at AI speed. That is the pattern. Vercel just happens to be the first big name to put that pattern on a status page with their CEO's signature attached. It will not be the last.

We are building CreateOS as AI-native deployment infrastructure, so this incident has our full attention. This post is not a gloat. Vercel handled disclosure better than most companies in their position would have. What follows is what the incident actually tells us about the next two years of application security, and what to do about it this week.

What actually happened

The sequence, as Vercel has publicly described it and CEO Guillermo Rauch laid out in a thread on X on April 19:

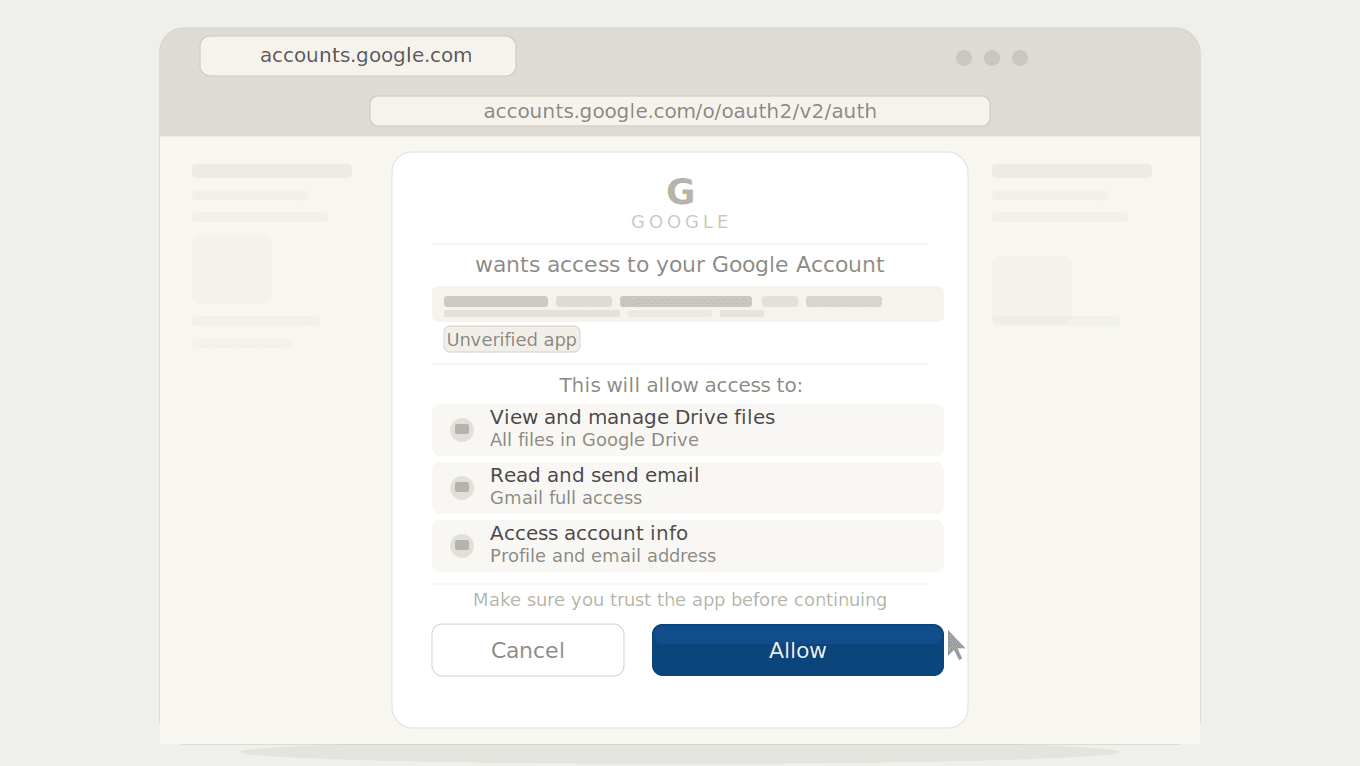

Context.ai, a third-party AI platform used by a Vercel employee, was breached. The specific compromised artifact is the Google Workspace OAuth application associated with Context.ai.

Through that compromised OAuth app, attackers took over the Vercel employee's Google Workspace account.

From the Workspace account, they pivoted into some Vercel internal environments.

Once inside, they enumerated environment variables that were not marked as "sensitive." Variables marked sensitive are encrypted at rest, and Vercel says there is no evidence those values were read. Non-sensitive variables were readable by design. That is a feature, not a bug.

A BreachForums listing subsequently appeared offering Vercel data, including access keys, source code fragments, employee accounts, GitHub tokens, npm tokens, and internal database records, for two million dollars, with a first payment of five hundred thousand in Bitcoin.

Vercel has engaged Mandiant, notified law enforcement, and shipped dashboard improvements for environment variable management. They have confirmed that Next.js, Turbopack, and their open source projects remain safe after a supply chain audit. If you consume those packages, you do not need to pin to a pre-incident version. Monitor the bulletin for updates, but the release path appears clean.

A few things are still claim, not fact. The BreachForums poster used the ShinyHunters moniker. Threat actors historically linked to ShinyHunters have denied involvement to BleepingComputer and Cyberinsider. Attribution is contested and probably will remain so. The two million dollar figure is the listing price, not a confirmed ransom demand. The precise scope of what was exfiltrated, especially around npm tokens, GitHub tokens, and internal Linear data, is what the BreachForums actor claims, not what Vercel has confirmed.

One specific indicator of compromise that every Google Workspace administrator reading this should search for right now, per Vercel's bulletin:

OAuth App: 110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com

If that app appears in your Workspace admin console under Security → API controls → App access control, revoke it and treat any credentials that passed through Context.ai workflows as potentially exposed. Vercel notes the Context.ai compromise "potentially affected hundreds of users across many organizations." You are almost certainly not the only downstream victim if you used it.

Why this matters beyond Vercel

The Vercel-specific story is real and serious. The ecosystem-level story is bigger. Three points.

The attack vector is going to repeat. Every AI tool your team installed in the last twelve months asked for Google Workspace, GitHub, Notion, Slack, or Linear OAuth access. Developers approve these prompts reflexively. Each one is a potential Context.ai. The rapid proliferation of AI tools across developer and operational workflows in the last eighteen months has dramatically expanded the footprint of third-party integrations holding OAuth grants. Every new AI tool added to a Workspace becomes another potential initial access vector for your company. The bar for "what gets approved" has dropped at exactly the moment when the blast radius of a compromised approval has grown. That mismatch is the structural vulnerability. Vercel was one instance of it. There will be more.

"Significantly accelerated by AI" is a claim worth taking seriously, and holding carefully. Rauch's exact words, in his April 19 thread: "We believe the attacking group to be highly sophisticated and, I strongly suspect, significantly accelerated by AI. They moved with surprising velocity and in-depth understanding of Vercel." This is a CEO's personal assessment in the immediate aftermath of a breach, not a forensic finding from Mandiant. Treat it that way. But it is not an outlandish claim either. The pattern Rauch is pointing at (attackers moving faster, understanding targets more deeply, enumerating systems with the kind of efficiency that used to require long manual reconnaissance) is consistent with what multiple threat intelligence teams have observed in recent incidents. The attacker economics are shifting. What used to take two weeks of manual enumeration may now take hours. If that is what happened here, and Vercel's forensic partners eventually confirm it, this incident is a preview of what the median breach will look like by the end of the year.

When your AI supplier gets breached, your defenders learn about it on your timeline, not theirs. Vercel found the compromise, identified the upstream vendor, published an IOC, and engaged Mandiant, all on their own clock. Downstream customers of Context.ai who were not Vercel are in a much harder position: they have to discover they were compromised before they can respond to it. The trust model of OAuth-connected AI tools assumes your supplier will detect and disclose quickly enough for you to act. That assumption has not been stress-tested well, and this incident is the stress test. This is a structural problem with how AI tools are integrated into modern developer workflows, not a problem with any one vendor's communication practices.

The 72-hour action plan

If you do nothing else after reading this, do these things. The order matters.

1. Google Workspace, right now.

Open your Admin console: Security → API controls → App access control. Search your OAuth app inventory for 110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com. If it appears, revoke it. While you are there, review every OAuth app with sensitive scopes: gmail.readonly, calendar, drive, admin.directory. Revoke anything with broad scopes and low usage. This is the single highest-leverage action in this list.
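If you would rather script this review than click through the console, the check can be sketched roughly as below. This is a minimal sketch, not an official tool. It assumes you have already exported your OAuth grants as a list of records with `clientId`, `displayText`, `scopes`, and `userKey` fields (the shape is modelled on the token listings in the Admin SDK Directory API, but treat the field names as an assumption and adjust them to your export). The function names are hypothetical.

```python
# IOC client ID from Vercel's bulletin, as quoted in this post.
IOC_CLIENT_ID = "110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com"

# Scope families this post calls out as sensitive. Extend for your environment.
SENSITIVE_SCOPE_FAMILIES = ("gmail.readonly", "calendar", "drive", "admin.directory")

SCOPE_BASE = "https://www.googleapis.com/auth/"


def sensitive_scopes(scopes):
    """Return the scopes that fall under one of the sensitive families."""
    hits = []
    for scope in scopes:
        # Strip the common Google prefix so "…/auth/drive.readonly" becomes "drive.readonly".
        short = scope[len(SCOPE_BASE):] if scope.startswith(SCOPE_BASE) else scope
        if any(short == fam or short.startswith(fam + ".") for fam in SENSITIVE_SCOPE_FAMILIES):
            hits.append(scope)
    return hits


def audit_grants(grants):
    """Split grants into IOC matches (revoke now) and sensitive-scope grants (review)."""
    revoke, review = [], []
    for grant in grants:
        if grant.get("clientId") == IOC_CLIENT_ID:
            revoke.append(grant)
            continue
        hits = sensitive_scopes(grant.get("scopes", []))
        if hits:
            review.append({**grant, "sensitiveScopes": hits})
    return revoke, review
```

Anything in the first list gets revoked immediately. The second list is your review queue: sort it by how rarely each app is used and start revoking from the top.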

2. If you are a Vercel customer.

Check your account activity log for the exposure window, with a conservative lower bound of April 1 through today. Rotate every environment variable containing secrets that were not marked as sensitive: API keys, tokens, database credentials, signing keys. Treat them as potentially exposed. Mark all of them sensitive going forward. If you used the Vercel-Linear integration, grep your Linear workspace for pasted secrets. Linear issues are a known place where developers paste credentials during debugging.
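To build a first-pass rotation list, a name-based triage can help. The sketch below assumes you have exported your environment variables as records with a `key` and a `type` field, where `type == "sensitive"` means the variable was flagged. That record shape and the name pattern are assumptions for illustration, not a documented export format. Name heuristics will miss oddly named secrets, so treat the output as a starting point and still review the full list by hand.

```python
import re

# Hypothetical heuristic: variable names that usually hold credentials.
SECRET_NAME_PATTERN = re.compile(
    r"(KEY|TOKEN|SECRET|PASSWORD|PASSWD|CREDENTIAL|PRIVATE|DATABASE_URL|DSN)",
    re.IGNORECASE,
)


def needs_rotation(env_vars):
    """Return names of variables that look like secrets but were not flagged sensitive."""
    flagged = []
    for var in env_vars:
        is_sensitive = var.get("type") == "sensitive"
        if not is_sensitive and SECRET_NAME_PATTERN.search(var.get("key", "")):
            flagged.append(var["key"])
    return sorted(flagged)


if __name__ == "__main__":
    example = [
        {"key": "STRIPE_SECRET_KEY", "type": "plain"},
        {"key": "DATABASE_URL", "type": "sensitive"},
        {"key": "NEXT_PUBLIC_SITE_NAME", "type": "plain"},
    ]
    for name in needs_rotation(example):
        print("rotate:", name)
```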

3. GitHub, personal and organization.

Review the OAuth apps installed on your personal account and your organization. Audit the GitHub organization audit log for the exposure window. If you stored npm tokens in Vercel environment variables, rotate them at npmjs.com immediately. If you publish npm packages, run npm view <your-package> time --json and look for unexpected versions. Compare the tarball of any recent publish to the git tag it claims to come from.
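The output of `npm view <your-package> time --json` is a JSON object that maps each version to its publish timestamp, plus `created` and `modified` bookkeeping keys. A small script can pull out everything published inside the exposure window. This is a sketch: the function name is hypothetical, and the April 1 cutoff in the usage example simply mirrors the conservative lower bound suggested above (2026 is taken from the dates used elsewhere in this post).

```python
import json
from datetime import datetime, timezone


def versions_published_since(npm_time_json, since):
    """Return (version, published_at) pairs published at or after `since`, oldest first."""
    times = json.loads(npm_time_json)
    recent = []
    for version, stamp in times.items():
        if version in ("created", "modified"):
            continue  # bookkeeping keys, not package versions
        # npm emits UTC timestamps with a trailing "Z"; normalise for fromisoformat.
        published = datetime.fromisoformat(stamp.replace("Z", "+00:00"))
        if published >= since:
            recent.append((version, published))
    return sorted(recent, key=lambda pair: pair[1])


if __name__ == "__main__":
    import sys

    # Usage: npm view <your-package> time --json | python check_publishes.py
    window_start = datetime(2026, 4, 1, tzinfo=timezone.utc)
    for version, published in versions_published_since(sys.stdin.read(), window_start):
        print(version, published.isoformat())
```

Any version in the output that you do not recognize is the one to compare against its git tag first.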

4. Enumerate every AI tool with OAuth scopes.

Across Google Workspace, Microsoft 365, GitHub, Slack, Notion, and Linear, list every AI tool your team has installed in the last twelve months. For each, ask one question: if this tool gets compromised tomorrow, what is the blast radius? If the answer is "bad," that is a risk register item this week. Consider moving new OAuth grants from user-driven approval to an allowlist with security sign-off. This is the structural fix. Everything else is a rotation.
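The allowlist idea can be sketched as a simple gate: a grant is approved only if security has already signed off on that app and on every scope it requests. The sketch below is illustrative only. The function name, the decision labels, and the allowlist shape (client ID mapped to approved scopes) are all assumptions, and how you hook this into each platform's approval flow will differ.

```python
def review_grant(client_id, requested_scopes, allowlist):
    """Decide on an OAuth grant request against a security-approved allowlist.

    `allowlist` maps client IDs to the set of scopes security has signed off on.
    """
    approved = allowlist.get(client_id)
    if approved is None:
        # Unknown app: nothing is pre-approved, so every scope needs review.
        return {"decision": "needs_security_review", "excess_scopes": sorted(requested_scopes)}
    excess = sorted(set(requested_scopes) - set(approved))
    if excess:
        # Known app asking for more than was signed off.
        return {"decision": "deny", "excess_scopes": excess}
    return {"decision": "approve", "excess_scopes": []}


if __name__ == "__main__":
    # Hypothetical allowlist entry for illustration.
    allowlist = {"notes-assistant.example": {"calendar.readonly"}}
    print(review_grant("notes-assistant.example", ["calendar.readonly", "drive"], allowlist))
```

The useful property is that scope creep surfaces as an explicit denial with the excess scopes named, instead of a consent prompt a developer clicks through.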

5. Package managers and release paths.

If you publish npm packages, freeze automatic dependency merges for seven to ten days. Diff your main branch HEAD against the last known-good commit before April 1. Check GitHub Actions run history for unexpected runs, especially manual workflow_dispatch triggers or runs from branches that no longer exist. Look at release and tag creations you did not make. This is where a Vercel-initial compromise could theoretically become a supply chain event for your product, even though Vercel has confirmed their own release path is clean.
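The Actions review can be partly automated once you have the run history exported. The sketch below assumes a list of run records with `id`, `event`, and `head_branch` fields (these mirror the workflow run objects in GitHub's REST API, but the export step is omitted) and a set of branch names that currently exist in the repository. The function name is hypothetical.

```python
def suspicious_runs(runs, existing_branches):
    """Flag runs that were manually dispatched or ran from a branch that no longer exists."""
    flagged = []
    for run in runs:
        reasons = []
        if run.get("event") == "workflow_dispatch":
            reasons.append("manual workflow_dispatch trigger")
        branch = run.get("head_branch")
        if branch and branch not in existing_branches:
            reasons.append(f"branch '{branch}' no longer exists")
        if reasons:
            flagged.append({"id": run.get("id"), "reasons": reasons})
    return flagged


if __name__ == "__main__":
    # Hypothetical run history for illustration.
    runs = [
        {"id": 101, "event": "push", "head_branch": "main"},
        {"id": 102, "event": "workflow_dispatch", "head_branch": "main"},
        {"id": 103, "event": "push", "head_branch": "tmp-fix"},
    ]
    for item in suspicious_runs(runs, existing_branches={"main"}):
        print(item["id"], "; ".join(item["reasons"]))
```

A flagged run is not proof of compromise. Legitimate manual dispatches and merged-then-deleted branches will show up too, so the output is a list to confirm with the people who own those workflows.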

6. Stress-test your rotation muscle.

The real gap this incident exposes is operational. If your team cannot rotate a critical secret end-to-end (including every downstream consumer that validates against it) in under thirty minutes, that is the thing to fix after you finish this checklist. Incidents happen on their schedule, not yours.

The OpenSourceMalware incident response playbook on GitHub goes deeper on each of these items and is being actively updated. Bookmark it. Juliet.sh has also published a thorough analysis that handles the epistemics carefully.

The architectural lesson

Everything above is a 72-hour fix. The architectural lesson is bigger, and it is what you should be rethinking over the next quarter. Three principles.

OAuth scope minimalism is now load-bearing. Every AI tool should have the smallest OAuth scope that lets it function. The problem is that most AI tools ask for broad scopes because broad scopes let them ship features faster. Read-only Gmail access is harder to build against than full Gmail access. Deployment-level scopes are easier to work with than project-scoped ones. Every time a vendor takes the easier path, their customers inherit the blast radius. Push back. Decline OAuth grants that demand more than the feature requires. Treat "we need broad scopes to provide a good experience" as a sales objection to answer, not a technical requirement to accept.

Secrets should be encrypted by default, not encrypted on request. Vercel's sensitive environment variable flag is opt-in. That is a design choice, and a defensible one in 2020. In 2026, given everything we now know about how AI tools broaden the attack surface, opt-in encryption for secrets is a liability. The right question to ask your deployment platform is not "can I encrypt secrets?" Everyone says yes. The right question is "what is encrypted by default, and what does an attacker who takes over a team account get to read?" If the answer to the second question is "any environment variable not explicitly flagged sensitive," you have a gap. Fix it, or plan for the incident.
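The difference between opt-in and default protection is small in code and large in consequence. The toy model below is not how Vercel or CreateOS implements anything. It is a hypothetical sketch in which every variable is sensitive unless someone explicitly opts out, and it answers the second question above: what does a session with read access to the team account get to see?

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvVar:
    name: str
    value: str
    # Sensitive unless explicitly opted out: the inverse of an opt-in flag.
    sensitive: bool = True


def dashboard_view(env_vars):
    """What a session with read access to the team account would see."""
    return {
        var.name: "[redacted]" if var.sensitive else var.value
        for var in env_vars
    }


if __name__ == "__main__":
    env = [
        EnvVar("DATABASE_URL", "postgres://user:pass@db.example/app"),
        EnvVar("SITE_NAME", "docs", sensitive=False),  # deliberate, visible opt-out
    ]
    print(dashboard_view(env))
```

With this default, a forgotten flag fails closed: the developer who never thinks about the setting ends up with a redacted value, not a readable one.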

Concentration of trust is a security property, not just a procurement preference. When the same vendor holds your deployment platform, your environment variables, your DNS, your CI, your secrets manager, and has OAuth access into your Google Workspace, one compromise cascades. The industry has spent a decade consolidating developer tooling onto fewer platforms in the name of velocity. That was the right tradeoff for velocity. It is not necessarily the right tradeoff for security. Separation of concerns across infrastructure vendors slows some things down. It also ensures that a breach at one vendor does not cascade into a breach of your entire stack. That tradeoff is worth having as an explicit conversation on your team, not an implicit assumption.

How CreateOS thinks about this

I will be direct. We cannot claim to be breach-proof. Nobody who understands security can make that claim. What we can talk about is architecture, because architecture is where the specific risks that produced this incident either get designed in or designed out.

CreateOS runs on NodeOps distributed infrastructure rather than a single centralized control plane. That is a deliberate choice about where trust concentrates. Managed services (PostgreSQL, MySQL, Kafka, Valkey) are provisioned per project, not shared across tenants in ways that create cross-customer blast radius. One-click deployment is built around "no configuration files to manage, no accidental API key leaks, and zero server overhead" as defaults, not as opt-in hardening. Every deployment runs through automatic security scanning as part of the default path.

On the AI-native side, which is where this incident matters most: our agents interact with your infrastructure through MCP with scoped API keys. They do not sit behind OAuth tokens with broad scopes across unrelated tools. The vector that produced the Vercel incident (a third-party AI tool holding Workspace-level OAuth scopes) is not a shape we accept in our own design. That is not because we are smarter. It is because we built this after the industry learned, painfully, that OAuth scope sprawl was a problem.

What we can honestly claim is this: the specific risks that produced the Vercel incident, namely overbroad OAuth scopes from AI tools, opt-in encryption on customer secrets, and single-vendor concentration of trust across deployment, DNS, CI, and secrets, are not concentrated in our architecture the way they are in the pre-AI-native deployment stack. If you want to compare architectures point-for-point, the CreateOS vs Vercel comparison lays it out without the marketing. You should still ask us hard questions. Any vendor that tells you their architecture is safe should be answering hard questions.

If this changed how you think

If this changed how you think about deployment architecture, the fastest way to try a different one is the migration guide at nodeops.network/createos/migrate-from/vercel. Most teams finish the move in fifteen minutes with zero downtime. It walks you through configuration mapping, environment variable import, build command translation, and DNS cutover.

If you prefer to do it from your terminal, install the migration skill directly:

npx skills add https://github.com/NodeOps-app/skills --skill vercel-to-createos

This is not a "drop Vercel, switch to us" pitch. Vercel has great engineers and real infrastructure and the incident does not change that. It is an invitation to compare architectures seriously, so that the next time this pattern plays out (and it will) you are not the team writing your own post-mortem.

Closing

Vercel will get through this. They have capable engineers, named Mandiant on day one, and disclosed publicly with their CEO's name attached to a thoughtful statement that distinguished confirmed from unconfirmed. That is better incident response than most companies manage. Respect the work.

The structural issue is bigger than Vercel, and it will be playing out across our industry for the next two years. AI tools are not going away. OAuth is not going away. The combination of the two is going to keep producing incidents like this one until the industry designs the risk out rather than rotating around it.

The teams that build on AI-native infrastructure from the start, with scope minimalism, default-encrypted secrets, and distributed trust baked into the architecture rather than retrofitted onto it, are the teams that will be reading someone else's post-mortem in 2027, not writing their own.

Build accordingly.

About NodeOps

NodeOps unifies decentralized compute, intelligent workflows, and transparent tokenomics through CreateOS: a single workspace where builders deploy, scale, and coordinate without friction.

The ecosystem operates through three integrated layers: the Power Layer (NodeOps Network) providing verifiable decentralized compute; the Creation Layer (CreateOS) serving as an end-to-end intelligent execution environment; and the Economic Layer ($NODE) translating real usage into transparent token burns and staking yield.
